- The Quiet Hardware Shift You Might Have Missed

- Why NPUs Matter in Your Daily Use

- Where GPUs Still Dominate (And Will Keep Dominating)

- Why “NPU vs GPU” Misses the Point

- The Experience Shift: AI Feels Native

- What This Means for Your Next Purchase

- The Bigger Trend: AI as Infrastructure

- The Bottom Line


By 2026, a surprising amount of artificial intelligence runs directly on your device. Live captions appear instantly. Voice-to-text feels immediate. Photo edits happen without uploading. Even compact language models operate locally.

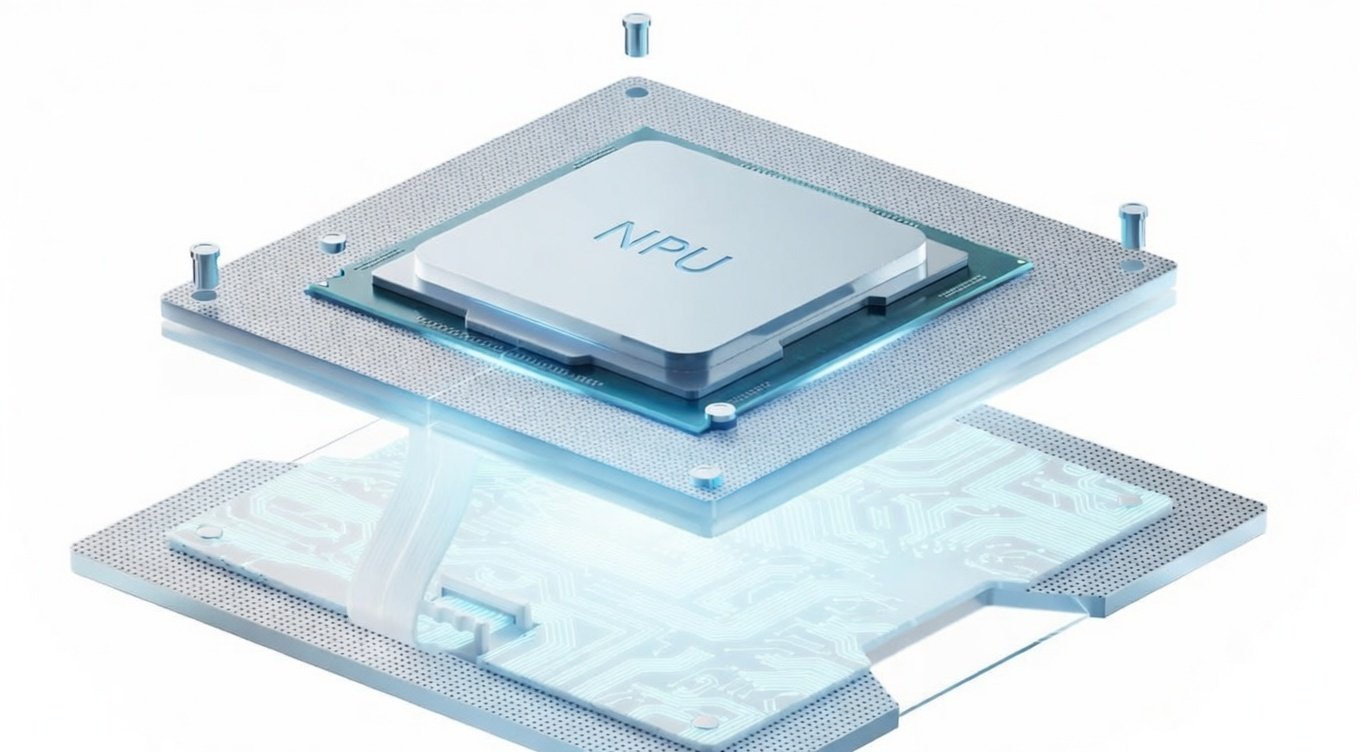

What’s making this possible isn’t just better software—it’s a hardware evolution. Specifically, the rise of Neural Processing Units (NPUs) working alongside the familiar Graphics Processing Units (GPUs).

Despite the “NPU vs GPU” comparisons you might see, the real story isn’t about rivalry. It’s about specialization.

The Quiet Hardware Shift You Might Have Missed

For years, GPUs powered accelerated computing. Although they were originally designed for graphics, their parallel architecture proved surprisingly effective for AI workloads, and much of deep learning’s growth was built on GPUs.

But GPUs weren’t designed exclusively for AI. They’re powerful, flexible, and fast—but can be power-hungry for small, continuous AI tasks. Using a GPU for lightweight inference is like revving a sports car at a stoplight.

Enter NPUs.

Built for efficiency, NPUs specialize in tensor math, matrix operations, and low-precision calculations, which are exactly what modern neural networks use. The result isn’t a dramatic visual change, but a noticeable experience upgrade: devices feel more responsive, more capable, and less dependent on the cloud.
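To make "low-precision calculations" a little more concrete, here is a minimal Python sketch of symmetric 8-bit quantization, one common way of squeezing floating-point values into small integers. The function names and sample numbers are illustrative assumptions, not any vendor's actual NPU pipeline.

```python
def quantize_int8(values):
    """Map floats to int8-range codes using one shared scale (symmetric quantization)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    # Round to the nearest code and clamp to the signed 8-bit range used here.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def int8_dot(a_codes, a_scale, b_codes, b_scale):
    """Dot product computed in integer math, rescaled to a float at the end."""
    acc = sum(x * y for x, y in zip(a_codes, b_codes))  # integer multiply-accumulate
    return acc * a_scale * b_scale


# Hypothetical weights and inputs, chosen only for illustration.
weights = [0.5, -1.0, 0.25, 0.75]
inputs = [1.0, 2.0, -0.5, 0.1]

w_codes, w_scale = quantize_int8(weights)
x_codes, x_scale = quantize_int8(inputs)

exact = sum(w * x for w, x in zip(weights, inputs))
approx = int8_dot(w_codes, w_scale, x_codes, x_scale)
print(f"float result: {exact:.4f}, int8 result: {approx:.4f}")
```

The two results land close together even though the inner loop only touches small integers. That trade, a little accuracy for much cheaper arithmetic, is the kind of work this class of hardware is built around.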

Why NPUs Matter in Your Daily Use

The biggest advantage isn’t raw speed—it’s efficiency.

Many everyday AI features are continuous, low-latency tasks:

- Noise suppression during calls

- Real-time captions & translations

- Background blur & camera enhancements

- Voice assistants & smart system features

These workloads benefit from sustained acceleration, not short bursts of power. An NPU can typically handle them using a fraction of the energy a GPU would require, which means less heat, less battery drain, and less fan noise.
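The arithmetic behind that claim is simply energy = power × time. The short Python sketch below uses placeholder numbers (the battery size, call length, and both wattages are assumptions picked for illustration, not measurements of any real chip) to show why a small difference in power draw adds up over a long-running task.

```python
def battery_share_used(power_watts, hours, battery_wh):
    """Fraction of a battery consumed by a constant load (energy = power x time)."""
    return (power_watts * hours) / battery_wh


# All figures below are hypothetical placeholders, not benchmark results.
BATTERY_WH = 60.0    # assumed laptop battery capacity in watt-hours
CALL_HOURS = 2.0     # assumed length of a call with noise suppression running
LOW_POWER_W = 0.5    # assumed draw of an efficient accelerator
HIGH_POWER_W = 5.0   # assumed draw of a more power-hungry one

for label, watts in [("low-power path", LOW_POWER_W), ("high-power path", HIGH_POWER_W)]:
    share = battery_share_used(watts, CALL_HOURS, BATTERY_WH)
    print(f"{label}: {share:.1%} of the battery")
```

Swap in your own numbers to see how the gap scales: because the load is continuous, every extra watt is multiplied by the full duration of the task.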

That’s why modern laptops can run AI features quietly in the background without killing battery life. It’s also why smartphones increasingly handle tasks locally that once required cloud processing.

Where GPUs Still Dominate (And Will Keep Dominating)

None of this makes GPUs obsolete—far from it.

GPUs remain unmatched for heavy parallel workloads:

- High-resolution image & video generation

- AI-assisted video editing and upscaling

- 3D rendering & large-scale simulations

- Model training & fine-tuning

- Gaming & real-time graphics pipelines

When a task needs to move vast volumes of data quickly, GPUs are still the natural fit. They’re designed for peak throughput, with power efficiency a secondary concern.

If NPUs excel at endurance, GPUs excel at intensity.

Why “NPU vs GPU” Misses the Point

Devices in 2026 increasingly rely on a hybrid strategy, not a winner-takes-all approach.

- NPUs handle the steady stream of AI enhancements shaping modern user experiences

- GPUs handle computational spikes—image generation, video processing, rendering, and larger models

This division of labor is subtle but impactful. Your device feels “smarter” not because one component replaced another, but because workloads are now routed to the best hardware for the job.
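As a rough mental model, that routing can be pictured as a simple rule of thumb. The Python sketch below is a toy: `Workload`, `pick_accelerator`, and the two flags are hypothetical names invented for this example, and real operating systems and runtimes weigh far more than two yes/no properties.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    continuous: bool  # does it run steadily in the background?
    heavy: bool       # does it need large bursts of parallel compute?


def pick_accelerator(job, has_npu=True, has_gpu=True):
    """Toy routing rule: heavy bursts go to the GPU, steady light jobs to the NPU, the rest to the CPU."""
    if job.heavy and has_gpu:
        return "GPU"
    if job.continuous and has_npu:
        return "NPU"
    return "CPU"


# Example jobs drawn from the features discussed in this article.
jobs = [
    Workload("live captions", continuous=True, heavy=False),
    Workload("image generation", continuous=False, heavy=True),
    Workload("spell check", continuous=False, heavy=False),
]

for job in jobs:
    print(f"{job.name} -> {pick_accelerator(job)}")
```

The fallback matters as much as the routing: on a device without an NPU, the same captions job would land on the CPU in this sketch, which is one reason the same feature can feel very different across hardware.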

The Experience Shift: AI Feels Native

Perhaps the most noticeable change is psychological. AI features no longer feel like remote services—they feel built-in.

Commands respond instantly. Edits happen locally. Tools work offline.

The friction introduced by network dependency—latency, loading indicators, upload delays—continues to fade. For users, the improvement often registers simply as:

“This device feels faster.”

Underneath that perception lies real architectural progress.

What This Means for Your Next Purchase

Spec sheets in 2026 need to be read a little differently.

Traditional metrics like CPU speed and GPU tiers still matter, but buyers increasingly encounter new language:

- TOPS ratings (Trillions of Operations Per Second)

- Dedicated AI engines / NPUs

- On-device inference capabilities
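If you are wondering where a TOPS number comes from, a common back-of-the-envelope estimate multiplies the number of multiply-accumulate (MAC) units by the clock speed, counting each MAC as two operations (one multiply, one add). The Python sketch below applies that estimate to a made-up chip. The 4096 MAC units and 1.5 GHz clock are assumptions for illustration, not the specs of any real product.

```python
def peak_tops(mac_units, clock_ghz, ops_per_mac=2):
    """Theoretical peak throughput in trillions of operations per second."""
    ops_per_second = mac_units * ops_per_mac * clock_ghz * 1e9
    return ops_per_second / 1e12


# Hypothetical accelerator: 4096 MAC units running at 1.5 GHz.
print(f"{peak_tops(4096, 1.5):.1f} TOPS")  # prints "12.3 TOPS"
```

Keep in mind that this is a theoretical peak. Advertised TOPS figures are usually quoted for a specific low-precision number format, and sustained real-world performance also depends on memory bandwidth and software support, so two chips with the same rating can behave differently.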

For most users who prioritize portability, battery endurance, and everyday responsiveness, strong NPU performance has become a meaningful differentiator.

For creators, gamers, and AI experimenters, GPU capability remains central.

Most premium devices now aim to balance both.

The Bigger Trend: AI as Infrastructure

This shift isn’t about NPUs replacing GPUs. It’s about AI becoming a foundational computing layer rather than a feature bolted onto software.

Acceleration is now workload-specific. Efficiency is prioritized alongside performance. Cloud processing remains important—but no longer mandatory for routine intelligence.

The Bottom Line

There won’t be a single dramatic “AI hardware moment.” Instead, devices will simply keep improving—responding faster, lasting longer, and feeling more capable.

Most users won’t need to know why.

Behind the scenes, NPUs and GPUs aren’t fighting for dominance. They’re dividing responsibilities—and your devices are better for it.

Ready to experience smarter AI on your device?

When shopping, look beyond GHz and core counts. Check for dedicated AI silicon (NPUs) alongside balanced GPU performance that matches how you actually use your device.

What AI feature do you wish worked offline? Let us know in the comments.