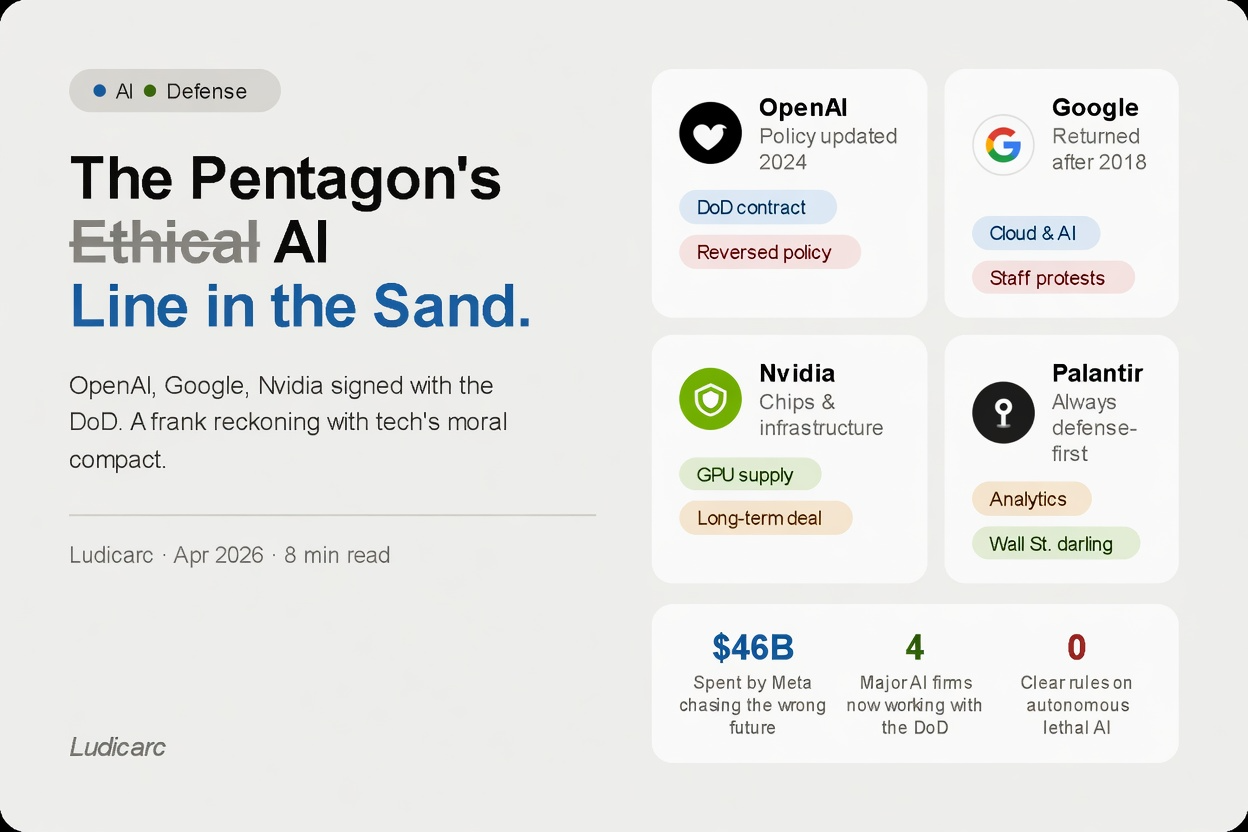

OpenAI, Google, and Nvidia are now working with the US military. Here’s what that actually means — and why it’s more complicated than it looks.

4 major AI firms now formally working with the DoD

2018: the year Google first pulled out of Pentagon AI work after employee protests

0 clear global rules on autonomous lethal AI systems

There’s a question that’s been quietly making its way through the tech world over the past couple of years. It doesn’t come up in product launches or earnings calls. It rarely makes the front page. But it sits underneath a lot of conversations happening inside the biggest technology companies on the planet. Should we be doing this?

“This” refers to a series of deals, contracts, and policy changes that have seen some of the most powerful AI companies in the world formally align themselves with the United States Department of Defense. OpenAI removed the blanket ban on military use from its usage policies. Google, which declined to renew a Pentagon AI contract in 2018 after thousands of employees protested, quietly came back. Nvidia, whose chips are the backbone of almost every serious AI system running today, has been supplying the US military for years. Palantir, always the most openly defense-friendly of the group, is now one of the most talked-about AI companies on Wall Street.

These aren’t rumours. The contracts are real, the money is real, and the technology is real. What’s less clear is what it all means — and whether anyone has really thought through where it ends up.

What changed and when

To understand why this moment matters, it helps to go back to 2018. Google was working on something called Project Maven, a Pentagon program that used AI to analyse drone footage. When employees found out, more than three thousand of them signed an open letter demanding the company pull out. Google announced it would not renew the contract.

It was a remarkable thing: workers at one of the world’s most powerful corporations successfully pushing back on a major business decision on moral grounds. For a few years, that moment felt like a line in the sand. Several major AI companies publicly stated they would not build weapons systems or work directly with military clients.

By 2023 and 2024, the mood had shifted. OpenAI quietly removed language from its usage policies that had previously prohibited military applications. Google returned to defense contracting. The justifications were more geopolitical this time — and harder to dismiss.

The argument for it

The case these companies make goes roughly like this: AI is going to be used in military contexts regardless of whether Silicon Valley participates. China is developing military AI aggressively. Russia is doing the same. The technology exists and the incentives are enormous.

If the US military is going to use AI either way, isn’t it better built by companies that take responsible development seriously — rather than ceding the field entirely?

There’s also a more specific version of the argument, focused on what AI can actually do: better logistics, faster intelligence analysis, more precise targeting that reduces civilian casualties, and stronger cybersecurity. The claim is that making these tasks more accurate saves lives, including civilian ones.

The argument against it

None of that fully resolves the discomfort — and the discomfort is worth taking seriously too. The most fundamental concern is about autonomous weapons: systems that can identify and engage targets without a human explicitly making the decision to fire. Early versions exist today, and every major military is developing more sophisticated ones.

Accountability is the second big problem. When a human soldier makes a decision that results in civilian deaths, there are processes for assigning responsibility: imperfect, frequently failing, but real. When an AI system makes the same decision, who is responsible? The company? The engineer? The general who deployed it? Right now, the honest answer is that no one clearly is. That’s a genuinely dangerous gap.

OpenAI: removed the military ban from its usage policy in 2024

Google: returned to DoD work after the 2018 employee protests

Nvidia: GPU chips powering US military AI systems

Palantir: always defense-first; now a Wall Street darling

What happened to the people who said no

In 2018, the employees won. That almost never happens. Workers at a major corporation pushed back on a significant and lucrative government contract on moral grounds, and the company listened. It was a genuine moment of accountability.

What’s notable about the current period is that internal dissent, while still present, has been much quieter. There have been resignations, protests, and open letters, most notably at Google in 2024. But the scale is smaller. The slow normalisation of things that once felt uncomfortable is how moral lines move: not through a single dramatic decision, but through a series of small, individually justifiable steps that add up to somewhere nobody quite decided to go.

What this has to do with everyday people

If you’ve used ChatGPT, if your phone has a Snapdragon processor, if you use Google Search — you’re already participating in the same technology ecosystem that is now, also, building tools for the Department of Defense. That’s not an accusation. It’s a clarification.

The question isn’t whether to engage with technology that has military applications; at this point, it’s almost impossible not to. The internet itself grew out of ARPANET, a project funded by the Pentagon’s Advanced Research Projects Agency (now DARPA). GPS was built for the military. The question is what level of proximity feels acceptable, and what kinds of applications cross lines that matter to you.

The honest answer about the Pentagon’s AI deals is that they don’t resolve neatly. What the moment calls for isn’t a clean verdict — it’s a serious conversation, inside these companies and outside them, about where the next lines should be drawn. Because in technology, as in most things, the lines you don’t consciously choose tend to get drawn for you.